May 11

A.I. in the Ditch

I take no credit (or blame) for what follows. It is the result, following a discussion on Facebook (which I was not involved in), of the reading, observation, and analysis by a friend of mine. I’ve mentioned him before on a couple other posts. That friend is the inimitable Eriku Mironasu (aka ECM), and the exposition below is in his incomparable style. (Or, is it “incomparable Eriku” and “inimitable style”? I get confused.) Anyway, as you may have guessed, the topic is artificial intelligence and the unlikely predictions by philosophical materialists / scientific reductionists that the machines will soon outmatch (and outwit?) us humans in brainpower.

Eriku does not share my Christian worldview, as he is a deist, but he does share some of my reservations and inclinations on the subject, though he has read a bit more on it than I have. So, when he posted this a couple of (late) nights ago, I thought y’all might be interested in his take on this subject. (Plus, of course, there are references to Terminators, cats, and even an elder god, and those are always fun.)

Ready?

—

WARNING: This is well beyond the attention span of 95% of modern humans — you have been warned!

So, I’m sitting here and this bit about how the human mind can’t be adequately modeled on a computer is kinda eating at me, so let’s take a quick journey through the modern state of machine intelligence and/or consciousness and see what shakes loose, shall we?

First, let’s take one of the major assumptions of AI and consciousness researchers from the materialist camp (I’m not going to get into dualist versus non-dualist, and everything in between, because I’ll be here all night, so those of you who know what I’m talking about will just have to put up with the shorthand!): that with sufficient computing power, consciousness and/or intelligence will ‘spontaneously’ arise as a by-product of having sufficient ‘computing units’. (More shorthand; see: Yudkowsky, whose ideas have been promulgated by others who are much more popular.)

What are ‘computing units’? Things like neurons or synapses or dendrites in the human brain, and their silicon analog, transistors. (This is an imperfect analogy because it makes *a lot* of assumptions, but you’re just going to have to take my word for it.)

Another way of putting this is to state that the medium doesn’t matter — it can be neurons, transistors, or the black heart of Cthulhu — as long as it’s capable of processing information it can, in theory, become conscious with enough ‘stuff’. (Which then leads us down the rabbit hole of “well, what do you mean by ‘information’?” and at which point I punch you in the face.)

The notion then becomes, if Yudkowsky and co. are correct, that we should see conscious behavior from computers if/when we have sufficient processing power to match the processing power of the human brain.

So…

The human brain has 1.5*10^14 synaptic connections (this is a guesstimate! I can’t stress this enough, since we really don’t know what that number is, but we’re going to use this for argument’s sake), which allows ~4.5*10^16 bit operations/second (again! guesstimate+wild speculation!), so how does this stack up to the GPUs and CPUs that are sitting on your desktop?

A modern GPU or CPU probably falls short, in bit operations (this is just the smallest, most elementary unit of processing by a *computer*, btw), by a factor of about 100. That’s one GPU. But when we apply the magic of Moore’s Law (computing power doubles roughly every 24 months, but it has been more like every 18 months for a long time running), that shortfall between your brain and that GPU is erased in, tops, twenty years, which is where guys like Kurzweil get their estimates for the “Singularity”.
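For the arithmetically inclined, here is a minimal sketch of that back-of-the-envelope math in Python. It takes the guesstimates above at face value (the brain numbers, the factor-of-100 shortfall, and the 18- and 24-month doubling periods). The function and constant names are made up for illustration, and the result is no more reliable than the numbers fed into it.

```python
import math

# Guesstimates taken from the text above; none of these are measured values.
BRAIN_SYNAPSES = 1.5e14          # synaptic connections
BRAIN_BIT_OPS_PER_SEC = 4.5e16   # bit operations per second
SHORTFALL_FACTOR = 100           # how far one GPU/CPU is said to fall short


def years_to_close_gap(shortfall_factor, doubling_months):
    """Years until repeated doubling makes up a given shortfall."""
    doublings_needed = math.log2(shortfall_factor)
    return doublings_needed * doubling_months / 12.0


# The two brain guesstimates together imply a per-synapse rate.
ops_per_synapse = BRAIN_BIT_OPS_PER_SEC / BRAIN_SYNAPSES
print(f"Implied bit operations per synapse per second: {ops_per_synapse:.0f}")

for months in (18, 24):
    years = years_to_close_gap(SHORTFALL_FACTOR, months)
    print(f"Doubling every {months} months: gap closed in about {years:.1f} years")
```

Under those assumptions the gap closes in roughly 10 to 13 years, which sits comfortably inside the “tops, twenty years” window.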

(At this point, assuming you have no life and don’t get out in the sun enough, you should be asking yourself why massive networks of computers, meaning “supercomputers” and not your home network, aren’t, therefore, self-aware. The answer to that is, well, uh…they just aren’t. But the researchers have some really great excuses that all involve adding more processing power which, as we’ll see, isn’t the panacea that they hope it is.)

So that means, assuming Yudkowsky’s supposition is correct, we should see self-aware, intelligent Terminators hell-bent on human destruction no later than the time I’m prepping for retirement in earnest. (*Assuming* we are correct about a lot of things we’re really just guessing about, but let’s grant that.)

The great thing about that is this is a semi-hard prediction, so prepare yourselves now for when this does not come to pass in 20 years, because…

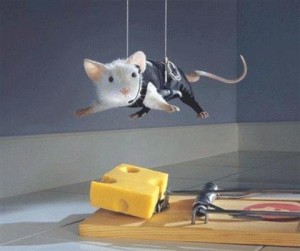

The problem here is that we don’t even see computers as ‘intelligent’ as the average mouse yet and probably not as ‘intelligent’ as (literally) brainless bacteria. For example, a mouse can *easily* and *instantly* discern that a cat is going to eat them, this is very bad, and they know to run in the opposite direction as far as their itty-bitty feetses can carry them (unless that cat is owned by Rob Howard, in which case you’ve got it made). [Editor’s Note: As I understand it, Rob’s cat is getting on in years, seriously rotund, not all that bright, and only recently “met” her first mouse. Slow (in both ways) cat = safe mice.] Got that? A *mouse* can instantly discern a looming threat and make the necessary, if primitive, calculation that the $#!t is about to hit the fan and they better get out of there NOW if they’d like to continue being a mouse. (I realize you realize this, of course, but I’m building up to something here…)

Compare and contrast that to modern machine ‘intelligence’: what a mouse or a cat or a person finds utterly and completely trivial and banal — recognizing a threat from afar, be it a cat, or a dog, or a human-killing machine from the future hell-bent on annihilating all of humanity — is a Herculean task for even the most powerful computers on the planet.

Here’s how modern “AI” works (in very over-simplified terms): it ‘brute forces’ all the aspects of what intelligence entails in things like mice and men — there is no ‘thinking’ and there are no leaps in intuition involved. (And here’s a mind-blower: since we don’t know how intelligence works — more on that in a bit — it’s entirely possible that if the notion of, for example, quantum consciousness is true, we’ll never get there.)

For example, when you scan a crowd and see a gun-toting, chrome-skinned killing machine from the future you run like hell, but a computer trying to discern something as banal as picking a face from a crowd — never mind a roid-riddled super-humanoid killing machine — is almost hopelessly stymied in all but the most ideal situations, because it isn’t actually thinking in any meaningful sense — it’s merely referencing a massive database of photographs, for example, and going through *all* of them in the hopes of finding a ‘hit’. This is exactly how a computer beats a Jeopardy or chess champion. They aren’t making any logical connections or ‘thinking’ the move through; they’re literally running *all* the moves possible, out to the edge of infinity, or scanning a massive database of facts to defeat the Jeopardy dork (and in the case of Jeopardy, this ‘smart’ machine needs the questions fed to it, because, obviously, it can’t understand information-rich language). At heart, this isn’t really any different than how the Internet works, and nobody is ever going to convince anyone that the Internet is intelligent, no matter how well it returns your search for “cats in party hats” photos.
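To make “brute force” concrete, here is a toy sketch in Python. It is not how any real chess or Jeopardy program is written (real chess programs cap the search depth and prune branches rather than running every line to the end). It is just an exhaustive search of a tiny, hypothetical game, with a counter showing how fast the work piles up. The game, the function name, and the counter are all illustrative assumptions.

```python
def can_win(stones, counter):
    """Return True if the player to move can force a win.

    Rules of the toy game (an assumption for illustration): players
    alternate removing 1, 2, or 3 stones, and whoever takes the last
    stone wins.
    """
    counter[0] += 1  # count every position the search visits
    if stones == 0:
        return False  # the previous player took the last stone, so we lost
    # Try every legal move and search each resulting position in full
    # (no shortcuts, no memory of positions seen before).
    outcomes = [not can_win(stones - take, counter)
                for take in (1, 2, 3) if take <= stones]
    return any(outcomes)


for pile in (4, 5, 10, 15, 20):
    visited = [0]
    result = can_win(pile, visited)
    print(f"{pile:2d} stones: player to move wins={result}, "
          f"positions searched={visited[0]}")
```

The answers come out right, but the search never “notices” that the losing piles are simply the multiples of four. It just grinds through every line of play, and the number of positions searched balloons with each added stone.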

Now, in order to become more ‘intelligent’, a computer needs more silicon, so that it can brute force your face off: now it isn’t just referencing a database of photos, but it’s running different lighting scenarios (the better to match up a face with dusk-like conditions in the real world) and, perhaps, building accurate 3D models of the skull in question, then matching that against a gazillion other 3D models it built (the better to gauge a face that isn’t turned in precisely dead-on fashion). Does the accuracy go up? Yep, of course. Is there any ‘intelligence’ involved? Nope! You’re just adding more and more sweaty hands to the job, but it’s not getting any more efficient (read: intelligent) in the process, no matter how many bit operations you throw at it. Meanwhile, Mr. Mouse, having recognized the cat instantly, is back in his hole eating your cheese and laughing about it all the way, and the cat is fuming about how he’ll get that little bastard next time and Rob is like “What is wrong w/ you? You had him and you let him go!”

(I can’t stress this enough: unless you firmly believe that consciousness is nothing more than the additive effects — or, if you prefer, a byproduct — of computing units, what computers do now that we deem ‘intelligent’ is anything but, and simply adding more processing power isn’t going to, de novo, ‘magic’ consciousness into existence because, again, we don’t even see computers, with enough horsepower to brutalize Jeopardy and chess grandmasters *under ideal situations*, able to reliably match up a face in low lighting conditions, which is something an actual horse can do w/ ease, and nobody this side of PETA is going to convince anyone that horses are super-intelligent organisms.)

Now, of course, some true believers will chime in and state that what’s important is the software running on the hardware. The problem is, the software is only as good as the person writing it, and that person is only as good at it as their grasp on what it is they’re trying to get the computer to do. But since we have *no idea* what consciousness or intelligence actually are (we have endless theories, tho!), how do you tell a computer what it is and how it should go about ‘thinking’? This is why any ‘advancement’ (remember: sweaty hands is not the same thing as building a better backhoe) in machine intelligence comes through brute-forcing it via additional computing units, because we have no way of telling a machine how to act ‘intelligently’ or ‘consciously’ because we have no comprehension of what these mean in concrete terms.

And that, my friends, is my quick, over-tired summation of why the Singularity’s hyper-intelligent killing machines, no matter how much silicon we throw at them, will never become intelligent in the way Yudkowsky, Kurzweil, et al. predict.

***THIS RIGHT HERE IS THE REAL PROBLEM RENDERING EVERYTHING I’VE SAID BASICALLY MOOT***

We need a real breakthrough in what intelligence *is* (not what we *think* it is, or what the hip kids tell us it is) and it’s an exceedingly, freakishly difficult problem that is, perhaps, insoluble.

Let me state this again: we have no idea what intelligence or consciousness is beyond the most threadbare, banal definitions, and until we apprehend what these seemingly immaterial objects are, we can’t very well tell a computer how to act that way…unless you believe consciousness is little more than the additive process of enough processing power* but, of course, this is an assertion with no experimental proof, so we won’t actually know it’s true until we see a computer at least as intelligent as a mouse and we know that, in fact, what we are seeing is intelligence and not just an ‘echo’ or ‘artifact’ of it, which requires that we know what intelligence actually is, which…ugh.

Without this breakthrough, we’ll be sitting here in 20 years with much faster computers that can think about as well as the average cat pic aficionado on the Internet.

***THUS ENDS THE REAL PROBLEM***

(Even then, of course, there is no guarantee that we can create such a machine even if we fully understand what it means to be conscious.)

Note: AI, as a discipline, has basically been in this ditch for 30+ years and shows no signs of getting out because of the problems thumbnailed herein.

* The nice thing about this somewhat tautological circumlocution is that you can basically move the goalposts out indefinitely by simply stating “well, clearly intelligence isn’t just the additive effects of synapses, but if you add in this, that, and the next thing, it’ll eventually pop up, we swear!” (And this might be true, but here we go again: if you have a theory and you constantly have to massively adjust the parameters *after the fact*, you sound like little more than one of those death-cult loons who keep pushing the end of the world further and further into the future because they were right all along, except that they forgot this…and this…and this…and this…and…and…and…)

Recommended Reading:

Demystifying Machine Intelligence

“You’re Smarter Than You Think”

—

Any questions? Comments? Brain aneurysms? Please direct them to: Eriku the Incomparable, c/o “A View from the Right”, and I’ll make sure he gets the message. Thanks, the Management.